Your AI Isn’t Wrong, It’s Alone: Why Model Disagreement Is the Real Enterprise Risk

Enterprises don’t have an AI accuracy problem.

They have a verification problem.

Artificial intelligence rarely fails loudly. It doesn’t crash. It doesn’t raise exceptions. It produces answers, fluently, confidently, and at scale.

That confidence is precisely the risk.

The greatest structural weakness in enterprise AI today is not model capability. It is model isolation. When a single system generates an output without comparison, without variance measurement, and without cross-validation, the organization inherits silent uncertainty.

Your AI isn’t wrong.

It’s alone.

Accuracy Is a Performance Metric. Agreement Is a Reliability Metric.

Most AI procurement decisions revolve around performance:

- Which model ranks highest?

- Which model scores best on benchmarks?

- Which model sounds the most fluent?

But enterprise environments are not benchmark environments.

A model can score 92% on standardized tests and still introduce unacceptable risk in:

- Regulatory filings

- Legal contracts

- Financial disclosures

- Multilingual compliance documentation

Why?

Because high average accuracy does not eliminate edge-case failure. And in enterprise systems, risk concentrates in edge cases.

A single output, no matter how fluent, contains no visibility into uncertainty.

A model cannot disagree with itself.

And without disagreement, there is no measurable uncertainty.

The Invisible Risk: Correlated Error

When organizations standardize on one model, they also standardize on its blind spots.

Every model has bias patterns.

Every model has domain weaknesses.

Every model updates over time.

Single-model deployment creates correlated error exposure, the systemic replication of the same misinterpretation across every output.

That is not a bug.

It is an architectural condition.

Reliability engineering in other industries solved this decades ago:

- Aviation uses redundant instrumentation.

- Distributed databases use quorum validation.

- Safety systems require cross-signal agreement.

AI systems, by contrast, are often treated as singular authorities.

That assumption is strategically fragile.

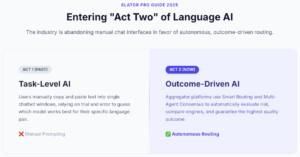

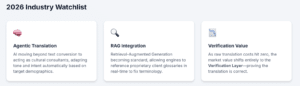

The Industry’s Shift: From Task-Level AI to Outcome-Driven AI

The language technology sector has entered what analysts describe as its “Act Two.”

According to the Slator Pro Guide: Translation AI (2025), the industry is moving beyond task-level AI, manually prompting a chatbot to translate a paragraph, toward outcome-driven language AI solutions.

This shift changes the central question.

Instead of asking:

“Which model should we use?”

Enterprises are beginning to ask:

“How do we guarantee the reliability of the outcome?”

This is where smart routing and agentic workflows emerge.

Rather than a human choosing between models, orchestration systems evaluate:

- Content risk profile

- Language pairing

- Domain sensitivity

- Historical variance patterns

Some platforms have begun embedding consensus mechanisms directly into their architecture. Through its SMART technology, MachineTranslation.com applies a consensus-based AI translation architecture that compares outputs from multiple engines before producing a final result. The emphasis is not on model competition, but on reducing single-model fragility through structured cross-validation.

This reflects a broader industry realization:

The problem is not choosing the smartest model.

The problem is designing systems that measure agreement before acting.

Source: Slator Pro Guide: Translation AI (2025) and MachineTranslation.com

Performance Is Abundant. Reliability Is Scarce.

We are entering a phase where model capability is increasingly commoditized.

Every major model can:

- Translate fluently

- Summarize coherently

- Generate structured outputs

Raw performance is no longer the differentiator.

Reliability architecture is.

Enterprise systems must shift from optimizing for single-shot excellence to designing for probabilistic alignment, the degree to which independent systems converge on the same interpretation.

Agreement increases confidence.

Disagreement exposes uncertainty.

Variance becomes a governance trigger.

Consensus is not redundancy.

It is a control system.

From Chat Interfaces to Reliability-Aware Systems

Early AI adoption centered around chat interfaces:

Employees copied content, selected a model, reviewed the output, and made manual judgments.

That is task-level AI.

Outcome-driven systems internalize those decisions.

A reliability-aware architecture does not simply generate an answer. It generates:

- An answer

- A confidence-weighted signal

- A measurable disagreement score

When outputs converge, automation proceeds.

When divergence exceeds threshold, escalation occurs.

Uncertainty becomes observable instead of invisible.

That shift, from assumption to measurement, is the foundation of enterprise AI governance.

The Real Enterprise Risk Is Silent Deviation

The most dangerous AI failures are not dramatic hallucinations.

They are subtle deviations:

- A softened compliance clause

- A misinterpreted regulatory nuance

- Terminology drift across multilingual filings

These are rarely obvious in isolation.

They become visible only in comparison.

A single model provides no internal comparator.

A consensus-based system exposes interpretive divergence before it becomes operational risk.

That is not feature enhancement.

It is structural risk mitigation.

The Strategic Reframe

The industry is obsessed with smarter models.

Enterprises should be obsessed with smarter systems.

The competitive advantage will not belong to organizations that deploy the most powerful individual model.

It will belong to those that architect verification into their workflows.

Because in enterprise environments:

Accuracy is assumed.

Verification is engineered.

Reliability is designed.

Your AI isn’t wrong.

It’s alone.

And in high-stakes systems, isolation is a risk.

Consensus is resilience.

AI and the Next Generation: Opportunities, Challenges, and Responsibilities

Your AI Isn’t Wrong, It’s Alone: Why Model Disagreement Is the Real Enterprise Risk

SEO for ChatGPT: Boost Your Brand in AI Responses