Software reviews are everywhere, but not all of them are useful. Some are written after a quick demo. Some are based only on feature lists. Others are shaped by affiliate commissions, brand reputation, or recycled opinions from other sites. That does not help someone trying to choose the right marketing tool for their business.

A proper software review should explain how the tool was tested, what it was tested against, and where it fits in real work. That matters even more with marketing software because the wrong choice can waste money, slow down campaigns, or lock a team into workflows that become difficult to fix later.

A good review method should not just ask, “Does this tool have many features?” It should ask better questions. Does the tool make common tasks faster? Is it easy to set up? Does the reporting make sense? Can a small business use it without a full technical team? Does it still perform well after the first impression wears off?

That is where a clear testing process makes the review far more useful.

What a Software Review Methodology Should Cover

A software review methodology is the system used to test, score, and explain a tool. It should give readers enough detail to understand how the final opinion was reached.

For marketing software, this usually means looking at setup, usability, features, integrations, pricing, support, reporting, and long-term use. The review should also consider the type of user the software is built for. A tool that works well for a large agency may be far too complex for a solo consultant. A simple email platform may suit a small business but feel limited for a team running advanced automation.

The best reviews make that distinction clear.

A review should also separate facts from judgment. For example, “The platform includes email automation, landing pages, and CRM tools” is a fact. “The automation builder feels slow when building multi-step campaigns” is a judgment based on use. Both can be useful, but readers need to know where each one comes from.

For a clear breakdown of this process, this guide to software review methodology shows how testing can be structured so readers know what is being assessed and why.

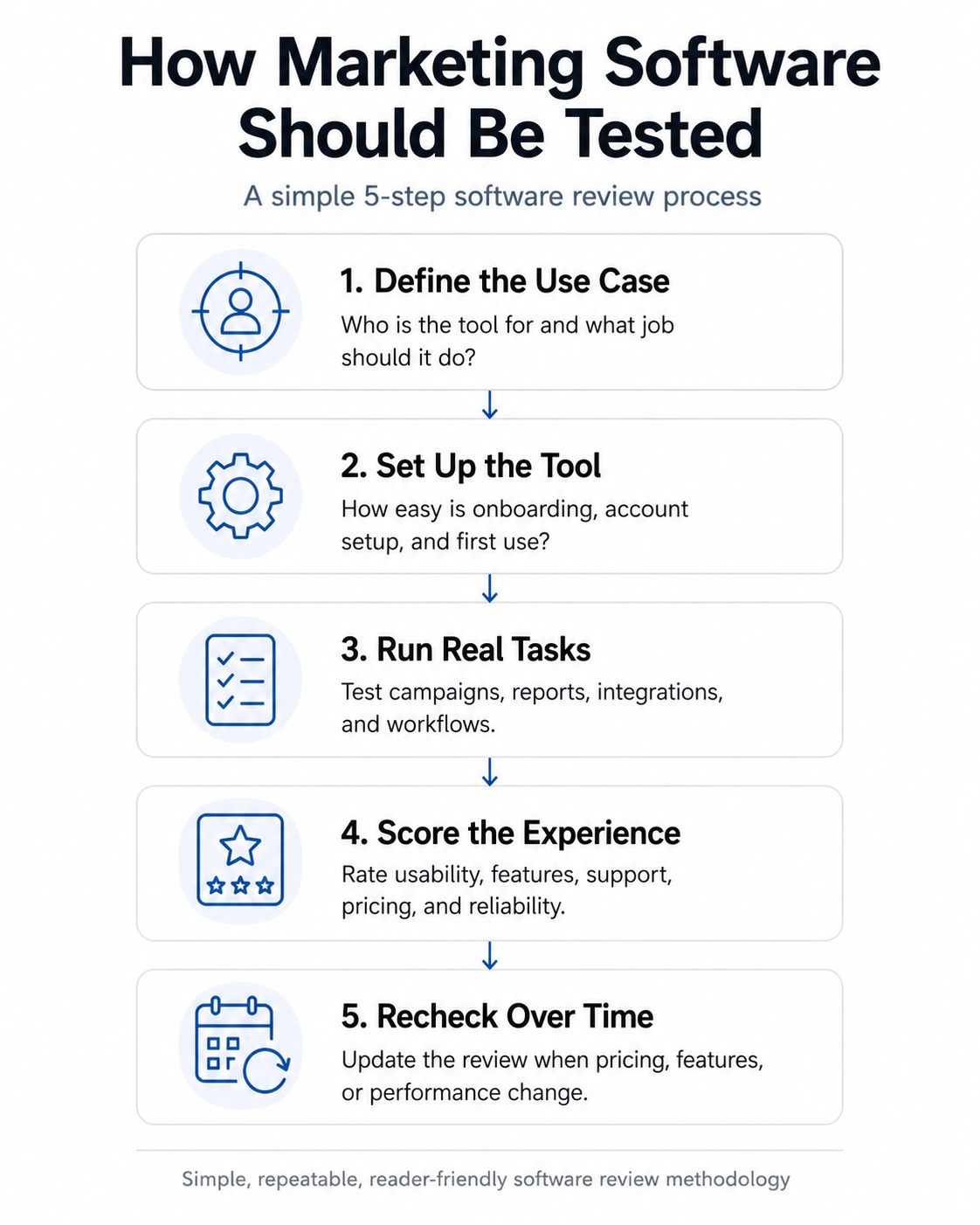

The 5-Step Software Testing Loop

A reliable software review follows a repeatable process, not a quick first impression. This simple loop shows how each tool can be tested from the initial use case through to long-term updates.

Key Areas to Test in Marketing Software

| Testing Area | What to Check | Why It Matters |
| --- | --- | --- |
| Setup and onboarding | Account creation, dashboard clarity, setup guides and first campaign steps | A confusing start can slow teams down before they even launch work |
| Ease of use | Navigation, editing tools, templates, drag-and-drop builders | Good software should reduce effort, not create more admin |
| Features | Automation, analytics, CRM, email, landing pages, AI tools, integrations | The tool needs to match the user’s actual workload |
| Performance | Page speed, email sending, reporting delays, bugs, uptime | Slow or unreliable tools can damage campaigns |
| Integrations | CRM, analytics, payment tools, ad platforms, CMS connections | Marketing tools rarely work alone |
| Pricing | Free trials, hidden limits, upgrade costs, contract terms | Cheap entry plans can become expensive fast |
| Support | Live chat, email help, knowledge base, response times | Poor support becomes a real issue when campaigns are live |
| Reporting | Data clarity, export options, attribution, dashboard quality | Bad reporting leads to bad decisions |
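
One way to turn the areas in the table into a single, explainable score is a weighted average. The sketch below is only an illustration: the weights, the 0 to 10 scale, and the names `WEIGHTS` and `overall_score` are assumptions, not part of any established methodology. A reviewer could swap in different weights for different audiences, for example giving integrations more weight when reviewing for agencies.

```python
# Hypothetical weights for the eight testing areas; they are assumptions
# chosen for illustration and must sum to 1.0.
WEIGHTS = {
    "setup": 0.10,
    "ease_of_use": 0.15,
    "features": 0.20,
    "performance": 0.15,
    "integrations": 0.10,
    "pricing": 0.15,
    "support": 0.05,
    "reporting": 0.10,
}


def overall_score(scores, weights=WEIGHTS):
    """Return the weighted average of per-area scores (each on a 0-10 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        # Refuse to score a tool that was not tested in every area.
        raise ValueError(f"missing scores for: {sorted(missing)}")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return round(sum(scores[area] * w for area, w in weights.items()), 2)


# Example scores for an imaginary tool (made-up numbers).
example_scores = {
    "setup": 8, "ease_of_use": 7, "features": 9, "performance": 6,
    "integrations": 7, "pricing": 5, "support": 6, "reporting": 8,
}
print(overall_score(example_scores))
```

Publishing the weights alongside the score lets readers see how the verdict was reached and re-weight it for their own priorities.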

Why Real-World Testing Beats Feature Lists

Feature lists are easy to copy. Real testing is harder.

A platform may claim to include automation, AI writing tools, audience segmentation, and analytics, but that does not mean those features work well. A review should test how those tools behave during normal use.

For example, an email marketing tool should not only be judged on whether it has automation. It should be tested by building a real automation flow. Can you create conditions easily? Can you segment users based on behaviour? Are reports easy to read after the campaign runs? Does the editor break when you switch between desktop and mobile previews?

The same applies to SEO tools, social media schedulers, CRM platforms, chatbot builders, and analytics software. A tool can look impressive on the sales page but feel awkward after two days of use.

That gap between promise and practical use is exactly what a review should uncover.

Matching the Software to the Right User

No software is right for everyone. That is why reviews should explain who each tool suits best.

A small business owner may need simple templates, clear pricing, and fast setup. An agency may prioritise client accounts, white-label reporting, workflow approvals, and team permissions. A growth marketer may want advanced tracking, A/B testing, and detailed attribution.

A fair review should not punish a simple tool for failing to act like an enterprise system. It should judge whether the tool does the job it claims to do for its target audience.

That also makes recommendations more honest. Instead of saying, “This is the best email marketing platform,” a useful review might say, “This is best for small businesses that want quick setup and simple automation, but it is not ideal for advanced segmentation.”

That kind of detail helps readers make better decisions.

Pricing Needs More Than a Quick Mention

Pricing is one of the most important parts of a software review, but it is often handled badly.

The monthly headline price rarely tells the full story. Many marketing platforms limit contacts, users, reports, automation steps, data history, AI credits, or integrations by plan. A tool that looks affordable at first can become expensive once a business grows.

A proper review should look at what each plan actually includes. It should also flag hidden issues such as annual-only pricing, steep upgrade jumps, limited free trials, cancellation rules, and support restrictions.

For marketing teams, the key question is not simply “How much does it cost?” The better question is, “At what point will this tool become too limited or too expensive for the work I need it to do?”
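
That question can be made concrete with a small calculation. The sketch below assumes plans are limited only by contact count, and the tiers in `PLANS` are invented for illustration; they are not any real vendor's pricing. It finds the cheapest plan that fits a given list size and the points at which growth forces an upgrade.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_price: float  # in whatever currency the vendor bills
    max_contacts: int     # contact limit included in the plan


# Hypothetical tiers for illustration only.
PLANS = [
    Plan("Starter", 15.0, 500),
    Plan("Growth", 49.0, 2_500),
    Plan("Pro", 149.0, 10_000),
]


def cheapest_plan(contacts: int, plans: List[Plan] = PLANS) -> Optional[Plan]:
    """Return the lowest-priced plan that fits the contact count, or None."""
    fitting = [p for p in plans if p.max_contacts >= contacts]
    return min(fitting, key=lambda p: p.monthly_price) if fitting else None


def upgrade_points(plans: List[Plan] = PLANS) -> List[Tuple[int, float, float]]:
    """Return (contact_count, old_price, new_price) for each forced upgrade."""
    ordered = sorted(plans, key=lambda p: p.max_contacts)
    return [
        (lower.max_contacts + 1, lower.monthly_price, upper.monthly_price)
        for lower, upper in zip(ordered, ordered[1:])
    ]


for contacts, old_price, new_price in upgrade_points():
    print(f"At {contacts} contacts the price jumps from {old_price} to {new_price}")
```

A real comparison would need more dimensions, such as users, automation steps, or AI credits, but even this simple view shows where a steep upgrade jump sits relative to a business's expected growth.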

Support and Documentation Matter More Than People Think

Support is easy to ignore during a first test, but it becomes important when something breaks.

Marketing software often sits at the centre of important business activities. If an email campaign fails, a CRM sync breaks, or a tracking script stops firing, users need fast help. A review should test or at least inspect the available support channels.

Good documentation also matters. Some tools have excellent knowledge bases with screenshots, walkthroughs, and troubleshooting steps. Others rely on thin FAQ pages that do not answer real questions.

A review should assess whether a typical user can resolve problems without needing a developer or an account manager.

Reviews Should Be Updated, Not Left to Decay

Marketing software changes all the time. Pricing changes. AI features are added. Old tools are removed. Interfaces are redesigned. Support quality can improve or decline.

That means a review written once and left untouched can become misleading.

A strong methodology should include periodic updates. The reviewer should revisit major tools when there are product changes, pricing updates, new integrations, or repeated user complaints. This is especially important for software categories moving quickly, such as AI marketing tools, automation platforms, and analytics products.
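
As a rough sketch of how such an update policy could be tracked, the function below flags a review as due when it is older than a chosen age or when a product change was logged after the last review. The 180-day threshold and the names `MAX_AGE` and `needs_update` are assumptions for illustration; a fast-moving category might warrant a shorter window.

```python
from datetime import date, timedelta

# Hypothetical policy: re-check a review after 180 days,
# or sooner if the product changed since the last review.
MAX_AGE = timedelta(days=180)


def needs_update(last_reviewed, change_dates, today):
    """True if the review is older than MAX_AGE or a change happened after it."""
    too_old = today - last_reviewed > MAX_AGE
    changed_since = any(changed > last_reviewed for changed in change_dates)
    return too_old or changed_since


# Example: a pricing change was logged one month after the review was written.
print(needs_update(date(2024, 1, 1), [date(2024, 2, 1)], date(2024, 3, 1)))
```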

Readers do not just need a snapshot. They need a review that still reflects the current product.

The Final Test – Would You Trust the Review Yourself?

A proper software review should feel useful, fair, and grounded in actual use. It should explain what was tested, how it was tested, and who the tool is really for.

The best reviews do not try to make every product sound perfect. They point out trade-offs. They explain weak spots. They show where the software performs well and where it may cause frustration.

That is what makes a methodology important. It turns a loose opinion into a practical guide. When readers can see the process behind the verdict, they are more likely to trust the result.

Better Reviews Start With Better Testing

A software review is only as good as the method behind it. If the testing is shallow, the article will be shallow too. If the reviewer uses the tool properly, checks the right details, and explains the process clearly, the final review becomes far more useful.

Marketing software can affect budgets, workflows, reporting, and customer communication. That makes careful testing worth the effort. A clear review method helps readers avoid poor-fit tools and choose software that matches the way they actually work.
