The first time I worked with an AI Music Generator, what surprised me was not how quickly it produced a track, but how it reframed my role in the process. I was no longer “making” music in the traditional sense. Instead, I was choosing between possibilities—guiding outcomes rather than constructing them step by step.

That subtle shift exposes a deeper change in creative workflows. For years, music production has been defined by execution: knowing how to build, layer, and refine sound. Now, the emphasis seems to be moving toward selection—deciding which generated result best represents an idea. This change does not eliminate creativity; it relocates it.

From Building Music To Curating Possibilities

Traditional workflows revolve around assembling elements:

- Writing melodies

- Arranging instruments

- Mixing and mastering

Each step requires both time and expertise. In contrast, AI-driven systems generate a complete track from a single request, which introduces a new kind of workflow.

Generation As A Starting Point Rather Than An End Goal

Instead of building from scratch, users:

- Generate multiple versions

- Compare variations

- Select or refine

In my testing, I found that the first output is rarely the final one. The value lies in the range of possibilities produced.

Creative Judgment Becomes The Core Skill

Because outputs are generated automatically, the user’s role shifts to:

- Evaluating tone

- Assessing emotional fit

- Choosing direction

This resembles editing more than composing.

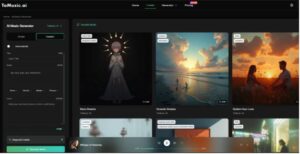

How The System Produces Variation By Design

One of the defining characteristics of these systems is variability. Even with identical inputs, results differ.

Probabilistic Output Rather Than Deterministic Results

Unlike traditional tools, which produce consistent outputs for the same input, AI systems introduce randomness:

- Slight changes in melody

- Different arrangement structures

- Variation in vocal delivery

This variability is not a flaw—it is a feature.
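To make the contrast concrete, here is a toy sketch in Python. It is not how any real music generator works: the five-note scale, the function names, and the idea of a numeric seed are all assumptions chosen for illustration. The first function always returns the same notes for the same prompt, while the second draws notes at random, so each seed produces a different take.

```python
import random

# Illustrative assumption: a five-note scale standing in for "music".
SCALE = ["C", "D", "E", "G", "A"]


def deterministic_melody(prompt: str, length: int = 8) -> list[str]:
    """Conventional-tool behaviour: the same input always maps to the same notes."""
    return [SCALE[(len(prompt) + i) % len(SCALE)] for i in range(length)]


def sampled_melody(prompt: str, seed: int, length: int = 8) -> list[str]:
    """Generative behaviour (toy version): notes are drawn at random per seed."""
    rng = random.Random(f"{prompt}:{seed}")  # hypothetical per-generation seed
    return [rng.choice(SCALE) for _ in range(length)]


if __name__ == "__main__":
    print(deterministic_melody("lofi beat"))
    for seed in range(3):
        print(seed, sampled_melody("lofi beat", seed))
```

Running the script prints one fixed melody followed by three sampled ones. In this sketch, reusing a seed reproduces a take exactly; whether a given product exposes anything like a seed is not something I can confirm.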

Why Variation Accelerates Creative Exploration

Because each generation is unique:

- Users can explore multiple directions quickly

- Ideas evolve through comparison

- Unexpected results can inspire new concepts

This reduces the cost of experimentation.

How Lyrics Transform The Selection Process

When lyrics are introduced, the dynamic changes again.

Narrative Anchors Limit Randomness

Using Lyrics to Music AI, I noticed that outputs become more consistent. The presence of structured text acts as a constraint:

- Melody aligns with phrasing

- Emotional tone stabilizes

- Structure becomes more predictable

Selection Focuses On Delivery Rather Than Composition

Instead of choosing between entirely different songs, users evaluate:

- Vocal tone

- Interpretation of lyrics

- Emotional nuance

This shifts attention from structure to performance.

The Actual Workflow As A Decision Loop

Although the interface appears simple, the workflow is iterative and decision-driven.

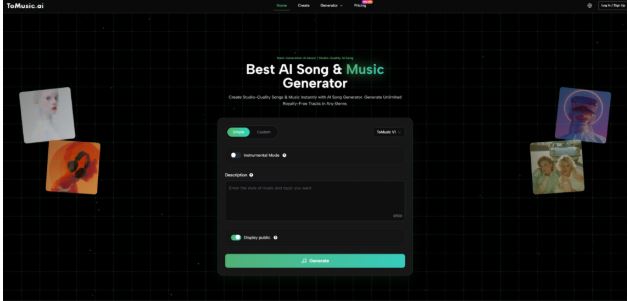

Step One: Define Intent Through Description Or Lyrics

Users provide:

- A descriptive prompt

- Or structured lyrics

This establishes the initial direction.

Step Two: Choose Generation Parameters

Options include:

- Style or genre

- Vocal presence

- Level of control

These parameters influence the range of possible outputs.

Step Three: Generate Multiple Versions And Compare

Users typically:

- Generate several variations

- Listen and evaluate

- Refine input based on results

This loop may repeat multiple times.
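The three steps can be sketched as a small loop. This is a minimal illustration under stated assumptions, not the tool's actual API: `fake_generate` is a hypothetical stand-in that returns a placeholder dictionary instead of audio, and `rate` and `refine` represent the listener's own judgment and prompt edits.

```python
import random
from typing import Callable

Track = dict  # placeholder for whatever a real generator would return


def fake_generate(prompt: str, seed: int) -> Track:
    """Hypothetical stand-in for a generator call; no audio is produced."""
    rng = random.Random(f"{prompt}:{seed}")
    return {"prompt": prompt, "seed": seed, "energy": rng.random()}


def decision_loop(
    prompt: str,
    rate: Callable[[Track], float],
    refine: Callable[[str, Track], str],
    rounds: int = 3,
    versions: int = 4,
) -> Track:
    """Generate several versions per round, keep the best seen so far,
    then refine the prompt before the next round."""
    best = None
    next_seed = 0
    for _ in range(rounds):
        # Step three, part one: generate several variations.
        candidates = [fake_generate(prompt, next_seed + i) for i in range(versions)]
        next_seed += versions
        # Step three, part two: listen and evaluate.
        top = max(candidates, key=rate)
        if best is None or rate(top) > rate(best):
            best = top
        # Step three, part three: refine the input based on results.
        prompt = refine(prompt, top)
    return best
```

For example, `decision_loop("calm piano", rate=lambda t: t["energy"], refine=lambda p, t: p + ", brighter")` returns the highest-rated placeholder track across all rounds. In practice the rating would come from listening, not from a number in a dictionary.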

Comparing Selection Driven And Construction Driven Workflows

The difference between these approaches is fundamental.

| Dimension | Construction Workflow | Selection Workflow |
| --- | --- | --- |
| Primary Activity | Building elements | Choosing outcomes |
| Time Allocation | Production heavy | Evaluation heavy |
| Iteration Cost | High | Low |
| Skill Focus | Technical execution | Creative judgment |
| Output Predictability | High | Variable |

This comparison highlights a shift from control to exploration.

Where Selection Based Creation Has The Most Impact

The benefits of this model depend on context.

High Volume Content Production

For creators producing frequent content:

- Speed matters more than precision

- Variation is an advantage

This aligns well with short-form media environments.

Concept Testing And Ideation

For early-stage projects:

- Multiple ideas can be explored quickly

- Weak concepts can be discarded early

This reduces wasted effort.

Non Technical Creative Workflows

For users without production experience:

- Barriers are significantly lower

- Creative participation becomes more accessible

Limitations Of A Selection Focused Approach

Despite its advantages, this model introduces new challenges.

Loss Of Fine Grained Control

Users cannot:

- Adjust individual notes

- Modify specific layers

This limits precision.

Decision Fatigue From Too Many Options

Generating multiple versions can lead to:

- Difficulty choosing

- Overanalysis

In some cases, too much choice becomes a constraint.

Dependence On Prompt Quality

Because outputs vary widely:

- Poor prompts lead to inconsistent results

- Clear articulation becomes critical

Why This Reflects A Broader Shift In Creative Systems

This transition is not limited to music.

From Execution Tools To Generative Systems

Across creative fields:

- Tools are moving toward generation

- Users guide rather than build

This changes how creativity is expressed.

Curation As A Core Creative Skill

As generation becomes easier:

- Selection becomes more important

- Taste and judgment gain value

This redefines what it means to create.

How Creators May Adapt To This Shift

Rather than replacing traditional workflows, this model may complement them.

Hybrid Workflows Combining Generation And Editing

Creators may:

- Generate ideas quickly

- Refine them using traditional tools

This combines speed with precision.

Developing New Evaluation Frameworks

As options increase, creators need:

- Criteria for selection

- Methods for comparison

This introduces new forms of creative discipline.
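One possible shape for such a framework is a weighted rubric. The sketch below is an assumption on my part, not an established method: the criteria reuse the three judgments named earlier (tone, emotional fit, direction), while the weights and the 1-to-5 rating scale are hypothetical values a creator would set for themselves.

```python
# Hypothetical weights; they sum to 1.0 so the score stays on the rating scale.
WEIGHTS = {"tone": 0.4, "emotional_fit": 0.4, "direction": 0.2}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 1-5) into a single score."""
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


def rank(candidates: dict[str, dict[str, float]]) -> list[str]:
    """Return candidate names ordered from highest to lowest weighted score."""
    return sorted(
        candidates,
        key=lambda name: weighted_score(candidates[name]),
        reverse=True,
    )


if __name__ == "__main__":
    takes = {
        "take_a": {"tone": 4, "emotional_fit": 3, "direction": 5},
        "take_b": {"tone": 3, "emotional_fit": 5, "direction": 4},
    }
    for name in rank(takes):
        print(name, round(weighted_score(takes[name]), 2))
```

Writing the criteria down before listening is the point of the exercise. It gives comparison a fixed reference and may help with the decision fatigue described above.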

Why The Real Transformation Is Subtle But Significant

At first glance, the system appears to automate music creation. But in practice, it changes something deeper: how decisions are made.

Instead of asking “how do I build this,” creators begin to ask “which version best represents my idea.” That shift—from execution to selection—may ultimately define the next stage of creative workflows.
